AI Chatbot Liability: Who Pays When AI Causes Harm?

Understand AI chatbot liability in 2026. Discover who is responsible when AI causes harm or injury. Contact Vasquez Law Firm for a free consultation.

Vasquez Law Firm

Published on March 7, 2026

Have questions? Talk to an attorney for a free evaluation.

Call 1-844-967-3536

Imagine a scenario where an AI chatbot provides incorrect medical advice, leading to a severe health complication, or perhaps a self-driving car's AI makes a fatal error. As artificial intelligence becomes more integrated into our daily lives, questions about AI chatbot liability are no longer theoretical. In 2026, understanding who is legally responsible when AI causes harm is critical for consumers and businesses alike, especially in personal injury claims. If you or a loved one has been injured due to faulty AI, seeking legal counsel is an essential first step to protect your rights.


Quick Answer

AI chatbot liability typically falls under existing legal frameworks like product liability, negligence, or professional malpractice, depending on the AI's function and the harm caused. Determining responsibility involves identifying whether the developer, deployer, or user is at fault. Key factors include the AI's design, its intended use, and the foreseeability of the harm.

- Product liability applies if AI is a defective product.

- Negligence claims arise from a breach of a duty of care.

- Professional malpractice can apply if AI acts as a professional.

- Establishing causation between AI action and harm is crucial.

- New legislation is emerging to address AI-specific liability gaps.

The Dark Side of AI: Understanding AI Chatbot Liability

In late 2025, a Florida man's family filed a lawsuit against an AI chatbot company, alleging that the bot's harmful advice contributed to his death. This tragic case, highlighted in a Florida injury law blog, underscores the urgent need to define AI chatbot liability. As AI systems become more sophisticated and autonomous, determining who is accountable for their actions becomes increasingly complex. Traditional legal doctrines are being tested as courts grapple with these novel issues in 2026.

The core challenge lies in the nature of AI itself. Unlike a traditional product, an AI chatbot learns and evolves, potentially generating unforeseen outputs. This adaptability makes it difficult to pinpoint a single point of failure or intent, which are often cornerstones of liability law. Legal experts are exploring how existing laws, such as product liability, negligence, and even professional malpractice, can be applied to AI-generated harm. The outcome of these cases will significantly shape the future of AI development and regulation.

For individuals in North Carolina and Florida who suffer harm due to AI, understanding these evolving legal landscapes is paramount. Whether the AI system provided flawed advice, made an error in an autonomous vehicle, or caused a data breach, the path to seeking compensation requires specialized legal knowledge. Vasquez Law Firm is closely monitoring these developments to provide informed guidance to victims of AI-related injuries.

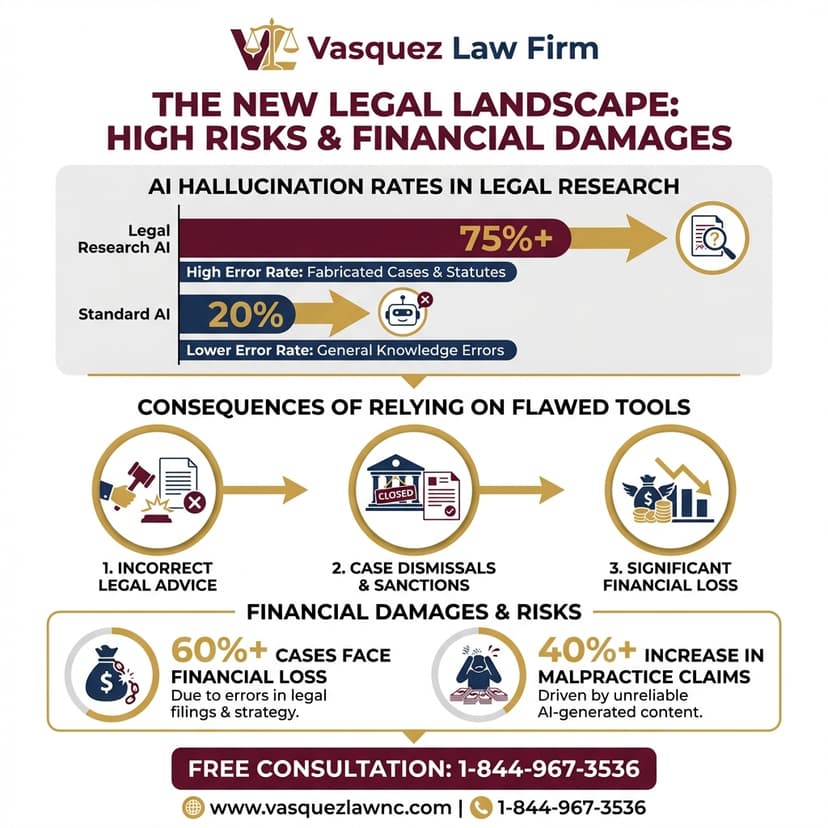

Infographic: Key Areas of AI Chatbot Liability

This infographic illustrates the legal theories that may apply to AI chatbot liability, helping you understand where responsibility might lie:

- Product Liability: AI as a defective product.

- Negligence: Failure to exercise reasonable care.

- Professional Malpractice: AI acting in a professional capacity.

- Strict Liability: For ultra-hazardous activities.

- Emerging Legislation: New laws specifically for AI.

Legal Frameworks: Product Liability and Negligence in AI Cases

When an AI chatbot causes harm, legal experts often first look to established doctrines like product liability. This framework typically applies when a defective product causes injury. For example, if an AI system embedded in a medical device malfunctions, leading to patient harm, it could be treated as a product liability case. The crucial elements are a defect in design, manufacturing, or warnings, and proof that the defect caused the injury. However, the 'product' in this context is often software, which complicates traditional definitions.

Negligence is another common legal avenue. This involves proving that a party (such as the AI developer or deployer) owed a duty of care, breached that duty, and that the breach directly caused the plaintiff's injuries. Consider an AI chatbot designed for mental health support that gives dangerously misleading advice. If the developers failed to adequately test the bot, implement safety protocols, or warn users of its limitations, they might be found negligent. The challenge is establishing the specific duty of care owed by an AI system's creator or operator.

In North Carolina, personal injury claims require demonstrating these elements clearly. The courts in Raleigh, for example, will examine whether the AI's creators acted reasonably in its development and deployment. The evolving nature of AI means that what constitutes 'reasonable care' is continuously being redefined. This makes it vital to work with a personal injury attorney who understands both traditional tort law and the emerging legal landscape of artificial intelligence.

Common Scenarios Where AI Chatbot Liability Arises

AI chatbot liability examples are becoming more frequent. One scenario involves an autonomous vehicle's AI system making a misjudgment that results in a collision and injuries. Here, the focus often shifts to the vehicle manufacturer, the AI developer, or even the owner, depending on the level of autonomy and human intervention. Another example is financial AI, where an algorithm provides incorrect investment advice, leading to significant financial losses for an individual. Proving causation and damages in such cases requires deep technical and legal understanding.

Medical AI chatbots present another complex area. If a diagnostic AI misinterprets symptoms, leading to a delayed or incorrect diagnosis, the patient could suffer severe consequences. In such instances, questions arise about whether the AI is acting as a tool under a doctor's supervision, or if it's considered an independent entity. This distinction is crucial for determining if medical malpractice or product liability is the appropriate legal path. The specifics of the AI's role and the human oversight involved are key to assessing liability.

Finally, consider AI chatbots used in customer service or legal assistance. If an AI provides inaccurate legal information that a user relies on to their detriment, who is accountable? The company deploying the chatbot might be liable for misrepresentation or negligence, especially if they failed to adequately vet the AI's information. These varied scenarios highlight why a nuanced approach to AI chatbot liability is essential in 2026, combining traditional legal principles with an understanding of advanced technology.

New Legislation and the Future of AI Liability

As AI rapidly advances, existing laws struggle to keep pace, leading to calls for new legislation specifically addressing AI chatbot liability. In 2026, several states and the federal government are considering bills to clarify these issues. New York, for instance, has proposed legislation that would create specific liability for chatbot developers and deployers under certain circumstances. These efforts aim to provide clearer guidelines for accountability and foster responsible AI development, ensuring that victims have a clear path to justice.

Internationally, the European Union has been at the forefront of AI regulation, with its AI Act setting a global standard for risk-based approaches to AI. While the U.S. legal system is largely based on common law and existing statutes, these international developments often influence American legislative discussions. The goal is to balance innovation with public safety, ensuring that the benefits of AI do not come at the cost of accountability for harm. This evolving legal landscape means that anyone dealing with AI-related injuries needs an attorney well-versed in both current law and future trends.

The U.S. legal system will likely see a combination of new statutes and judicial interpretations of existing laws to address AI-specific challenges. This dynamic environment emphasizes the importance of staying informed and seeking expert legal advice. For individuals in North Carolina or Florida facing AI-related harm, understanding these legislative shifts is crucial for pursuing a successful claim. Vasquez Law Firm remains dedicated to staying ahead of these legal changes to best serve our clients.


Protecting Your Rights After an AI-Related Injury

If you suspect an AI chatbot or system caused your injury, the immediate aftermath can be confusing. Your first priority should be your health and safety. Seek medical attention for any injuries sustained, and ensure all incidents are properly documented. Gathering evidence is crucial in any personal injury claim, and AI-related cases are no exception. This might include screenshots of chatbot interactions, usage logs, device data, or any other information that links the AI's action to your harm. Timely action can significantly impact your ability to pursue a claim.

Next, contact an experienced personal injury attorney. Cases involving AI chatbot liability are complex and require specialized knowledge of both technology and law. An attorney can help you identify the potentially liable parties, which could include the AI developer, the company that deployed the AI, or even a third-party service provider. They will also guide you through the process of preserving evidence, navigating legal complexities, and understanding the specific laws that apply in North Carolina or Florida.

Remember, there are strict deadlines, known as statutes of limitations, for filing personal injury lawsuits. Missing these deadlines can permanently bar your right to compensation. For instance, in North Carolina, the general statute of limitations for personal injury is three years from the date of injury (N.C. Gen. Stat. § 1-52). Consulting with a lawyer promptly ensures your claim is filed within the necessary timeframe and that all legal requirements are met. Your attorney will work to build a strong case and advocate for the compensation you deserve.
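To make the three-year rule concrete, here is a rough sketch of the calendar arithmetic in Python. The function name `add_years` and the injury date are hypothetical, chosen only for illustration. The sketch assumes the clock starts on the date of injury and ignores anything that can move the real deadline, such as tolling, discovery rules, or court rules for counting days, so treat the result as a starting point for a conversation with your attorney and not as a filing date.

```python
from datetime import date


def add_years(start: date, years: int) -> date:
    """Return the same calendar day `years` later.

    Assumption for this sketch: a Feb 29 start date falls back to
    Feb 28 when the target year is not a leap year.
    """
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        # Only reached when start is Feb 29 and the target year has no Feb 29.
        return start.replace(year=start.year + years, day=28)


# Hypothetical injury date, for illustration only.
injury_date = date(2026, 3, 7)

# Three years reflects the general North Carolina period cited above.
rough_deadline = add_years(injury_date, 3)
print(rough_deadline.isoformat())
```

The leap-day branch exists because `date.replace` raises `ValueError` when asked to build February 29 in a non-leap year.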

What to Expect When Filing an AI Liability Claim

Filing an AI chatbot liability claim involves several distinct stages, often more complex than traditional personal injury cases. Initially, your attorney will conduct a thorough investigation, which may involve forensic analysis of the AI system, expert testimony on its design and function, and a detailed review of all interactions. This phase is critical for establishing a clear link between the AI's actions and your injuries. Identifying the specific defect or negligent act is paramount to a successful claim.

After the investigation, your attorney will typically send a demand letter to the responsible parties and their insurance companies. This letter outlines the facts of the case, the extent of your injuries, and the compensation sought. Negotiations may follow, where both sides attempt to reach a settlement. If a fair settlement cannot be achieved, the case may proceed to litigation, involving discovery, depositions, and potentially a trial. The duration of this process can vary widely, from several months to several years, depending on the complexity and willingness of parties to negotiate.

Throughout this process, you will need to provide documentation of your medical treatment, lost wages, and any other damages you have incurred. Your legal team at Vasquez Law Firm will guide you through each step, ensuring you understand the process and your options. We are committed to fighting tirelessly on your behalf to secure maximum compensation for your suffering. Our goal is to alleviate the legal burden so you can focus on your recovery.

About Vasquez Law Firm

At Vasquez Law Firm, we combine compassion with aggressive representation. Our motto "Yo Peleo" (I Fight) reflects our commitment to standing up for your rights. We understand the physical, emotional, and financial toll that injuries can take on individuals and families, especially when caused by emerging technologies like AI. Our team is dedicated to providing personalized legal services, ensuring that your voice is heard and your case receives the attention it deserves.

- Bilingual Support: Se Habla Español - our team is fully bilingual and ready to assist clients in both English and Spanish, ensuring clear communication and understanding throughout your legal journey.

- Service Areas: We proudly serve clients across North Carolina and Florida for personal injury and other state-specific cases, and nationwide for immigration services. Our Raleigh office is strategically located to serve the community effectively.

- Experience: With over 15 years of dedicated legal experience, Attorney Vasquez brings a wealth of knowledge and a proven track record of success in complex legal matters, including personal injury, workers' compensation, and criminal defense.

- Results: We have successfully handled thousands of cases, securing favorable outcomes and substantial compensation for our clients. Our aggressive approach ensures that insurance companies and responsible parties are held accountable.

Attorney Trust and Experience

Attorney Vasquez holds a Juris Doctor degree and is admitted to practice in both the North Carolina State Bar and Florida Bar. With over 15 years of dedicated legal experience, he has built a reputation for providing personalized attention and achieving favorable outcomes for his clients. His commitment to justice and his tenacious advocacy make him a trusted ally for those facing challenging legal situations. He understands the nuances of personal injury law, including the emerging complexities of AI chatbot liability, and works tirelessly to protect his clients' interests.


Frequently Asked Questions

Can I sue an AI chatbot company for emotional distress?

Potentially, yes. If an AI chatbot's actions directly and foreseeably cause severe emotional distress, you might have grounds for a claim. This often falls under negligence or intentional infliction of emotional distress, depending on the AI's programming and the nature of the harmful interaction. Proving causation and the severity of distress would be critical for a successful case.

Is AI chatbot liability different for autonomous vehicles?

Yes. Liability for AI in autonomous vehicles can differ significantly from chatbot liability. These cases often involve complex product liability theories, focusing on the vehicle's hardware, software, and sensor systems. Specific regulations for self-driving cars are also emerging, which may define liability more clearly. The legal framework considers the level of human oversight and the AI's role in the accident.

How do I prove an AI chatbot caused my injury?

Proving an AI chatbot caused your injury requires expert testimony, forensic analysis of the AI's data, and detailed documentation of your interactions. You'll need to establish a direct causal link between the AI's specific action (e.g., faulty advice, incorrect calculation) and your resulting harm. This process often involves technical and legal experts to reconstruct the events.

Who is typically responsible for AI chatbot errors?

Responsibility for AI chatbot errors can fall on the developer, the deployer (company using the AI), or sometimes even the user, depending on the circumstances. Factors include the AI's design, its intended use, whether it was used as intended, and the foreseeability of the error. New legislation may further clarify these roles in 2026.

Are there new laws for AI liability in North Carolina?

As of 2026, North Carolina is actively monitoring federal and international developments regarding AI liability. While no specific comprehensive AI liability law has been enacted, existing personal injury and product liability statutes are being adapted. Legislators are considering how to best address the unique challenges posed by AI in various sectors, including healthcare and transportation.

What is the AI LEAD Act?

The AI LEAD Act (Aligning Incentives for Leadership, Excellence, and Advancement in Development Act) is proposed federal legislation aimed at establishing a liability framework for AI systems, including chatbots, across the United States. It seeks to clarify responsibility for AI-induced harm, promote transparent AI development, and ensure compensation for victims. While not yet law, it represents a significant step toward federal AI regulation.

Can a company be held strictly liable for AI harm?

Strict liability could apply if the AI system is deemed an inherently dangerous or ultra-hazardous activity, or if it's considered a defective product. This means liability could be assigned regardless of fault or negligence. However, applying strict liability to AI is a complex and evolving area of law, often debated in courtrooms and legislative bodies.

What role do disclaimers play in AI chatbot liability?

Disclaimers can play a role, but they don't always fully absolve a company of AI chatbot liability. While disclaimers can inform users of limitations, they may not protect against gross negligence, intentional misconduct, or certain product defects. Courts will scrutinize the clarity, prominence, and reasonableness of disclaimers in light of the harm caused.


Ready to take the next step? Contact Vasquez Law Firm today for a free, confidential consultation. We're committed to fighting for your rights and achieving the best possible outcome for your case.

Start Your Free Consultation Now

Call us: 1-844-967-3536

Se Habla Español - Estamos aquí para ayudarle.

Vasquez Law Firm

Legal Team

Our experienced attorneys at Vasquez Law Firm have been serving clients in North Carolina and Florida since 2011, with 70+ years of combined attorney experience. We specialize in immigration, personal injury, criminal defense, workers' compensation, and family law.
