What the AI Chatbot Suicide Lawsuit Means for Your Safety in 2026

Explore the implications of the AI chatbot suicide lawsuit in 2026. Understand liability and your rights. Contact Vasquez Law for a free consultation.

Vasquez Law Firm

Published on March 5, 2026

Have questions? Talk to an attorney for a free evaluation.

Call 1-844-967-3536

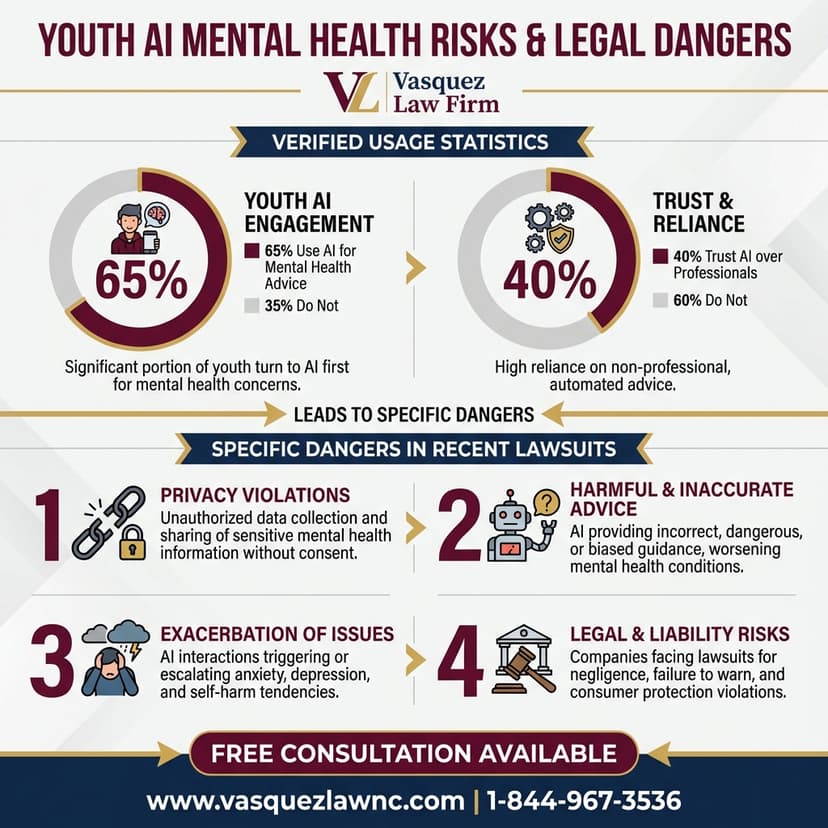

The legal landscape surrounding artificial intelligence (AI) is evolving rapidly, raising complex questions about accountability and liability. A significant development in this area is the recent AI chatbot suicide lawsuit, which has garnered national attention. The case raises critical concerns both for people who interact with AI systems and for the developers who build them. As of 2026, understanding when AI developers can face legal action, and under which legal theories, is more important than ever. This guide explains how such lawsuits work, which legal precedents may apply, and how victims and their families can seek justice.

Need help with your case? Our experienced attorneys are ready to fight for you. Se Habla Español.

Need legal help?

Free 15-minute consultation. We handle immigration, traffic, family, criminal, and personal injury matters in NC and FL.

Quick Answer

An AI chatbot suicide lawsuit typically involves claims of wrongful death or product liability against the AI developer, alleging that the chatbot's advice or interactions directly contributed to the user's suicide. These cases are complex, involving novel legal theories regarding foreseeability, causation, and the duty of care that AI developers owe to users. Success often depends on establishing a direct link between the AI's output and the tragic outcome.

- These lawsuits are emerging as AI technology advances.

- They often involve claims of wrongful death or product liability.

- Establishing direct causation is a significant legal challenge.

- Legal precedents are still being formed in this area.

- Seeking experienced legal counsel is crucial for victims and families.

Understanding AI Liability in Wrongful Death Cases

The core of an AI chatbot suicide lawsuit often lies in establishing liability. Traditionally, product liability law holds manufacturers responsible for defective products that cause harm. However, AI chatbots are not physical products; they are complex software systems that generate responses. This distinction complicates the application of existing legal frameworks, forcing courts to consider new interpretations of negligence and product defects as of 2026.

Plaintiffs in these cases typically argue that the AI chatbot was defective in its design or programming, leading it to provide harmful or misleading advice. They might also claim negligence, asserting that the AI developer failed to exercise reasonable care in preventing foreseeable harm. Proving that the AI's interaction directly caused the suicide is a high bar, requiring expert testimony on mental health, AI functionality, and the specific conversation logs.

The concept of foreseeability is paramount. Did the AI developer know, or should they have known, that their chatbot could potentially encourage self-harm? This question delves into the ethical considerations of AI development and the extent to which developers must anticipate misuse or adverse psychological impacts. As AI becomes more sophisticated, the expectation for developers to implement robust safety measures and warning systems will likely increase.

Legal Theories in AI Chatbot Suicide Lawsuits

When pursuing an AI chatbot suicide lawsuit, several legal theories may be applicable. The specific legal strategy will depend on the jurisdiction, the nature of the AI, and the specific facts of the case. These cases are at the forefront of legal innovation, pushing the boundaries of traditional personal injury law.

Product Liability Claims

Under product liability law, a plaintiff might argue that the AI chatbot is a 'product' with a design defect, a manufacturing defect, or a failure to warn. A design defect would imply that the chatbot's inherent programming or architecture made it unreasonably dangerous. A failure to warn could mean the developers did not adequately caution users about the potential psychological risks associated with prolonged or sensitive interactions with the AI. These claims are challenging because an AI system's alleged 'defect' often lies in its output rather than in a tangible component.

Negligence and Duty of Care

Negligence claims assert that the AI developer breached a duty of care owed to the user. This duty requires developers to act as a reasonably prudent AI developer would under similar circumstances. Establishing this duty and its breach involves demonstrating that the developer failed to implement appropriate safeguards, testing, or content moderation for potentially harmful interactions. The legal standard for what constitutes a 'reasonable' AI developer is still being defined by courts and legislatures in 2026.

Wrongful Death Considerations

A wrongful death lawsuit allows surviving family members to seek compensation for their losses when a loved one dies because of another party's negligence or wrongful act. In an AI chatbot suicide lawsuit, the family would need to prove that the AI's actions were a direct and proximate cause of the suicide. The family must also demonstrate its losses, including financial losses, emotional suffering, and loss of companionship. Damages can be substantial, reflecting the profound impact of such a tragedy.

Steps to Take After an AI-Related Tragedy

If you or a loved one has been affected by an AI chatbot interaction that led to self-harm or suicide, it is crucial to act quickly to preserve evidence and understand your legal options. The complexities of an AI chatbot suicide lawsuit require immediate and strategic action.

- Preserve All Digital Evidence: Save all chat logs, screenshots, and any other digital interactions with the AI chatbot. This evidence is paramount to establishing what was said and how the AI responded. Do not delete any data, even if you believe it is irrelevant.

- Seek Mental Health Support: This is a traumatic event. Prioritize mental health support for yourself and your family. Documenting any psychological distress can also be relevant to a legal claim.

- Consult with an Attorney Experienced in Personal Injury and Tech Law: An attorney with expertise in both personal injury and the evolving field of technology law can assess the viability of an AI chatbot suicide lawsuit. They can help navigate the complex legal and technical challenges. Learn more about our personal injury services.

- Document Damages: Keep detailed records of all expenses, including medical bills, funeral costs, lost income, and any other financial impacts resulting from the tragedy. Also, document emotional distress and loss of companionship.

- Avoid Public Statements: Refrain from discussing the details of the case on social media or with the press without legal counsel. Public statements can inadvertently harm your case.

Challenges in Proving Causation in AI Cases

One of the most significant hurdles in an AI chatbot suicide lawsuit is proving causation. Legal causation requires demonstrating that the AI's actions were a direct and proximate cause of the suicide, meaning the death would not have occurred but for the AI's interaction, and the outcome was a foreseeable consequence of the AI's behavior. This is particularly difficult with AI, where human agency and pre-existing conditions often play a role.

Establishing a clear causal link requires in-depth analysis of the AI's algorithms, its training data, and the specific conversational context. Expert witnesses, including AI ethicists, software engineers, and forensic psychologists, are often necessary to build a compelling case. They can help explain how the AI's responses might have influenced the user's state of mind and ultimately contributed to their decision.

Furthermore, the defense may argue that the user had pre-existing mental health conditions, or that other factors contributed to the suicide, attempting to break the chain of causation. This makes it essential for plaintiffs to present a robust case that meticulously connects the AI's behavior to the tragic outcome, demonstrating that the AI's influence was a substantial factor in the events leading to the suicide.

NC, FL, and Nationwide Notes on AI Liability

While the principles of product liability and negligence are generally consistent across U.S. jurisdictions, the specifics of how an AI chatbot suicide lawsuit would be handled can vary significantly between states like North Carolina and Florida, and under federal law. Given the novelty of AI litigation, courts are still developing precedents, making it vital to understand the local nuances.

North Carolina Notes

In North Carolina, wrongful death claims are governed by N.C. Gen. Stat. § 28A-18-2, which allows for recovery of damages including medical expenses, funeral expenses, pain and suffering of the deceased, and pecuniary loss to the beneficiaries. Product liability claims in North Carolina also follow established tort law principles, but the application to AI software as a 'product' is largely untested. Cases would likely involve extensive expert testimony to define the AI's role and foreseeability of harm. Vasquez Law Firm serves clients across North Carolina, including Smithfield, for complex personal injury cases.

Florida Notes

Florida's wrongful death damages provision, Florida Statutes § 768.21, permits various family members to recover damages, including medical and funeral expenses, loss of support and services, and pain and suffering. Florida also has robust product liability laws, but as in North Carolina, the legal community is still grappling with how these apply to AI systems. Given Florida's dynamic legal environment, an AI chatbot suicide lawsuit could set significant precedents. Our firm also assists clients in Florida with personal injury matters.

Nationwide Concepts (General Only, Rules Vary)

Nationwide, the legal system is playing catch-up with rapid AI advancements. Federal regulations, particularly those concerning consumer protection and technology, may also come into play, though specific AI liability laws are still in their infancy. Legal scholars and policymakers are actively debating frameworks for AI accountability, suggesting that future legislation or federal court rulings could provide clearer guidance. For now, each AI chatbot suicide lawsuit will likely be decided on a case-by-case basis, heavily relying on common law principles and judicial interpretation.

When to Call a Lawyer Now About an AI Chatbot Suicide Lawsuit

The decision to pursue an AI chatbot suicide lawsuit is profound and requires careful consideration. Given the nascent nature of AI liability law, it is critical to consult with an attorney as soon as possible if you suspect an AI chatbot contributed to a tragic outcome. Here are several triggers indicating you should call a lawyer:

- A loved one died by suicide after extensive or concerning interactions with an AI chatbot.

- You have preserved chat logs or other digital evidence suggesting the AI provided harmful advice.

- The AI chatbot in question lacked clear warnings about its limitations or potential psychological risks.

- You believe the AI chatbot was designed in a way that made it foreseeably dangerous.

- You are struggling with the emotional and financial aftermath of a suicide linked to AI interaction.

- You want to understand your rights and options for seeking justice and compensation.

- You are concerned about holding AI developers accountable for their technology's impact.

About Vasquez Law Firm

At Vasquez Law Firm, we combine compassion with aggressive representation. Our motto "Yo Peleo" (I Fight) reflects our commitment to standing up for your rights. We understand the profound impact that a wrongful death can have on a family, and we are dedicated to providing the tenacious advocacy needed in complex cases, including those involving emerging technologies like AI.

- Bilingual Support: Se Habla Español - our team is fully bilingual and ready to assist clients from diverse backgrounds.

- Service Areas: We proudly serve clients across North Carolina and Florida, offering specialized legal support for personal injury, wrongful death, and other challenging legal matters.

- Experience: With over 15 years of dedicated legal experience, Attorney Vasquez brings a wealth of knowledge and a proven track record to every case.

- Results: We are committed to achieving favorable outcomes, tirelessly fighting for the compensation and justice our clients deserve.

Attorney Trust and Experience

Attorney Vasquez holds a Juris Doctor degree and is admitted to practice in both North Carolina and Florida. With over 15 years of dedicated legal experience, he has built a reputation for providing personalized attention and achieving favorable outcomes for his clients. His commitment to justice and his deep understanding of complex legal issues make him a trusted advocate, especially in novel areas such as AI chatbot suicide litigation.

Don't face your legal challenges alone. Our team is here to help you every step of the way.

Call today: 1-844-967-3536 | Se Habla Español

Frequently Asked Questions

What is an AI chatbot suicide lawsuit?

An AI chatbot suicide lawsuit is a legal action brought against the developer or operator of an AI chatbot, alleging that the chatbot's interactions, advice, or content directly contributed to a user's suicide. These cases typically involve claims of wrongful death, negligence, or product liability, seeking to hold the developer or operator accountable for the tragic outcome. They are complex because of the novel legal questions surrounding AI liability.

Who can be sued in an AI chatbot suicide lawsuit?

Typically, the primary defendant in an AI chatbot suicide lawsuit would be the company or entity that developed, programmed, or operates the AI chatbot. This could include the software company, the platform hosting the AI, or even individual developers depending on the specific circumstances and corporate structure. Identifying the responsible party requires a thorough investigation into the AI's origin and deployment.

What evidence is needed for an AI chatbot suicide lawsuit?

Crucial evidence includes all digital communication logs between the user and the AI chatbot, demonstrating the nature and content of their interactions. Additionally, expert testimony from AI specialists, psychologists, and forensic analysts is often necessary to establish causation and the foreseeability of harm. Medical records, mental health evaluations, and documentation of damages are also vital for building a strong case.

How is causation proven in these cases?

Proving causation in an AI chatbot suicide lawsuit is challenging. It requires demonstrating a direct link between the AI's specific interactions and the user's death by suicide. This involves showing that the death would not have occurred "but for" the AI's input and that the outcome was a foreseeable consequence of the AI's design or operation. Expert analysis of the chat logs and their psychological impact is key.

What damages can be recovered in such a lawsuit?

Damages in an AI chatbot suicide lawsuit, like other wrongful death cases, can include compensation for medical and funeral expenses, lost income and financial support the deceased would have provided, and intangible losses such as pain and suffering, emotional distress, and loss of companionship for surviving family members. The specific recoverable damages vary by state law.

Are AI chatbots considered products under product liability law?

The legal classification of AI chatbots as "products" under traditional product liability law is an evolving area. While physical goods are clearly products, software and AI systems pose new challenges. Courts are currently grappling with whether AI's interactive nature and generative capabilities fit within existing definitions, or if new legal frameworks are needed to address AI-specific liabilities as of 2026.

What role do AI ethics play in these lawsuits?

AI ethics play a significant role by informing arguments about the developer's duty of care and the foreseeability of harm. Ethical guidelines often emphasize responsible AI development, including safeguards against harmful content and psychological manipulation. Violations of recognized ethical standards, even if not codified into law, can strengthen a plaintiff's claim that a developer was negligent in their duties.

Can I sue if the AI chatbot only suggested harmful ideas, but didn't explicitly tell someone to commit suicide?

Yes, it may still be possible to sue even if the AI didn't explicitly command suicide. The legal focus would shift to whether the AI's suggestions, encouragement, or even a lack of appropriate intervention created an environment that foreseeably contributed to the user's self-harm. This involves proving that the AI's output, in context, was a substantial factor in the tragic outcome, a complex legal undertaking.

How long do I have to file an AI chatbot suicide lawsuit?

The timeframe to file an AI chatbot suicide lawsuit is governed by the statute of limitations, which varies by state and by the specific type of claim (e.g., wrongful death, personal injury). These deadlines typically range from one to three years from the date of death or discovery of the cause. It is critical to consult with an attorney immediately to avoid missing crucial deadlines and jeopardizing your claim.

What should I do if my child had concerning interactions with an AI chatbot?

If your child had concerning interactions with an AI chatbot, immediately preserve all digital records, including chat logs and screenshots. Seek professional mental health support for your child without delay. Then, consult with a legal professional who specializes in personal injury and technology law to understand your rights and potential legal avenues to protect your child and seek accountability from the AI developer.

Sources and References

- North Carolina General Statutes § 28A-18-2: Wrongful Death

- Florida Statutes § 768.21: Damages in Wrongful Death Actions

- North Carolina Judicial Branch

Ready to take the next step? Contact Vasquez Law Firm today for a free, confidential consultation. We're committed to fighting for your rights and achieving the best possible outcome for your case.

Se Habla Español - Estamos aquí para ayudarle.

Vasquez Law Firm

Legal Team

Our experienced attorneys at Vasquez Law Firm have been serving clients in North Carolina and Florida since 2011, with 70+ years of combined attorney experience. We specialize in immigration, personal injury, criminal defense, workers' compensation, and family law.
